Quantum RFM: the customer as a state system

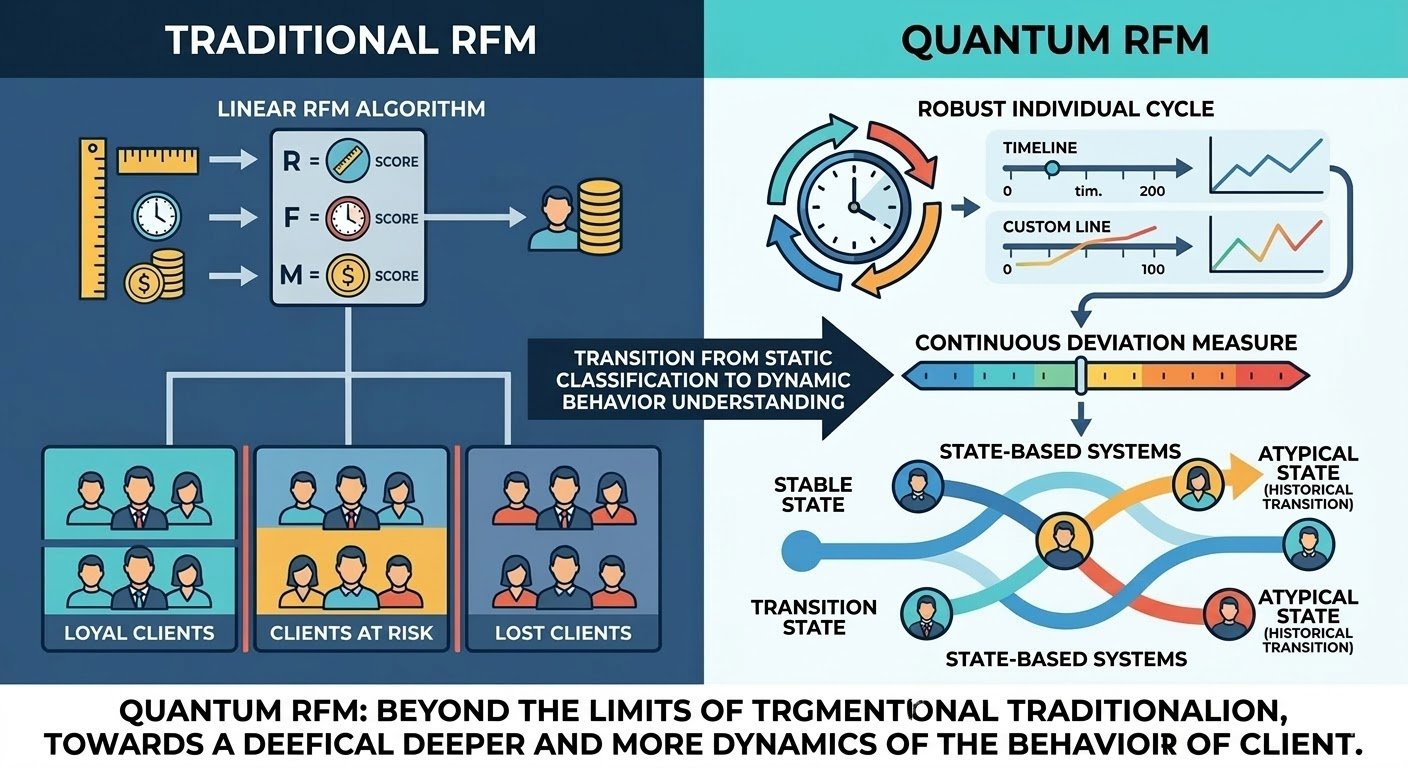

The limits of traditional RFM segmentation and an interpretive framework that treats the customer as a dynamic system, not a static object to be classified.

The limits of traditional RFM segmentation

The traditional RFM model (recency, frequency, monetary value) emerged in contexts where repeated behaviour is a reasonable assumption. Recency is treated as an absolute measure, frequency as a relatively stable property, value as an economic summary. The customer is described as an entity to which scores are assigned, on the basis of common thresholds that are supposed to hold for the entire customer base.

In many real contexts, especially B2B, this approach quickly shows its limits. Customers with very different behaviours end up in the same class, while similar customers are separated only because they are passing through different temporal phases. The result is a segmentation that looks orderly but loses contact with the concrete meaning of the behaviours observed.

Two customers who have been silent for thirty days can be in completely different situations. For one it is a natural pause consistent with their history; for the other it is a meaningful signal of discontinuity. Treating them as equivalent means giving up on interpreting the behaviour and replacing it with a convenient but uninformative classification.

The limit of traditional RFM is conceptual before it is operational: time is treated as an absolute quantity and the customer as a static object, while real behaviour is dynamic, situated, and strongly dependent on individual history.

The customer as a state system

In a transactional system the customer is not directly observable. What we observe is a discrete sequence of events: orders, interactions, periods of absence. The customer emerges as a structure reconstructed from this sequence.

Thinking of the customer as a state system means recognising that their current state has meaning only in relation to previous states. Each observation sits inside a trajectory and describes where the customer is with respect to their own history, more than who they are in absolute terms.

In this perspective the customer coincides with the behaviour that emerges from the observed sequence. Each new order is a state transition that changes the configuration of the system, and the model limits itself to observing it.

This approach adheres to the way data exists and is produced, and avoids projecting onto the customer a stability that has never been directly observed.
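As a minimal sketch of this view, the customer can be represented as nothing more than a sequence of order dates, from which the gaps between orders and the current silence are derived. The function names and the sample dates are illustrative assumptions, not part of Quantum RFM itself.

```python
from datetime import date


def intervals_in_days(order_dates):
    """Gaps in days between consecutive orders, in chronological order."""
    ordered = sorted(order_dates)
    return [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]


def days_since_last_order(order_dates, as_of):
    """Length of the current silence, measured at the observation date."""
    return (as_of - max(order_dates)).days


# Hypothetical order history for a single customer.
orders = [date(2024, 1, 8), date(2024, 1, 29), date(2024, 2, 20), date(2024, 3, 25)]

gaps = intervals_in_days(orders)
silence = days_since_last_order(orders, as_of=date(2024, 4, 10))
print(gaps, silence)  # [21, 22, 34] 16
```

Nothing here describes the customer in absolute terms: both values only make sense relative to the sequence they were computed from.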

The problem of absolute recency

Recency is often used as an objective and intuitive measure. The time elapsed since the last order seems to provide an immediate indication of the state of the relationship, which is why it is adopted as a primary signal in many models.

Without a reference, however, this measure is ambiguous. Time does not have the same meaning for all customers, nor within the same customer at different moments. Thirty days can represent a normal pause in one context and a meaningful deviation in another.

Without an individual reference, absolute recency mixes profoundly different situations and produces signals that are hard to interpret. The same temporal observation can indicate stability, risk, or simple noise depending on the customer’s history.

The problem is not solved by refining thresholds or multiplying classes. As long as time stays absolute, the measure stays disconnected from the structure of the observed behaviour. What changes, in Quantum RFM, is the way time is interpreted.

The individual cycle as an empirical reference

Every customer manifests over time a certain regularity or irregularity in reordering. This regularity is an empirical fact observable with different intensities depending on the case, not an assumption of the model.

The individual cycle emerges from the distribution of intervals between events, a property the model reads in the existing data without imposing predefined thresholds. Estimating it through median and percentiles means accepting that real behaviour is often asymmetric, discontinuous, and influenced by contingent factors.
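One way to read the cycle from existing data is sketched below, using the median and quartiles from Python's standard `statistics` module. The function name `estimate_cycle`, the choice of the inclusive quantile method, and the sample intervals are assumptions made for illustration.

```python
from statistics import median, quantiles


def estimate_cycle(intervals):
    """Summarise a customer's reorder rhythm with the median and quartiles."""
    if len(intervals) < 2:
        raise ValueError("at least two intervals are needed to estimate a cycle")
    # method="inclusive" treats the observed intervals as the full history
    # rather than a sample from a larger population (an assumption).
    q1, _, q3 = quantiles(intervals, n=4, method="inclusive")
    return {"median": median(intervals), "q1": q1, "q3": q3}


# Hypothetical gaps in days between consecutive orders for one customer.
intervals = [14, 18, 20, 21, 21, 25, 36, 40, 90]

cycle = estimate_cycle(intervals)
print(cycle)  # {'median': 21, 'q1': 20.0, 'q3': 36.0}
```

No threshold is supplied from outside: the three numbers come entirely from the customer's own history, and the single 90-day gap stays in the data without dragging the central estimate.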

An example makes the practical sense of this choice concrete. A Milanese wine shop with steady sales typically reorders every 21 days, and the third quartile of its intervals sits at 36 days. When it reaches the thirty-fourth day of silence, a universal threshold of 60 days still considers it active and no alert fires. The individual-cycle reading instead signals that the customer is running roughly 60% beyond its usual rhythm (34 days against 21) and is close to the upper edge of its normal band. That is the moment a careful sales rep picks up the phone, while the signal is still fresh.

A Roman wine bar with slow rotation, in the same system, typically orders every 84 days, with a first quartile at 60 days. Observed on the sixty-first day of silence, it is classified as dormant by the universal threshold, which triggers a reactivation sequence. The individual-cycle reading places it at the floor of its normal behaviour, with the next order statistically expected in 3 to 6 weeks. Contacting it at that moment is noise, useless at best.

The two cases show the same dynamic read from opposite angles. The universal threshold is wrong on both sides: it produces false reassurance for the first customer and a false alarm for the second. The problem is not solved by choosing a better threshold, because any shared threshold inherits the same blindness.
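The two cases can be reproduced with a few lines of arithmetic. The sketch below uses only the figures given in the example (usual intervals of 21 and 84 days, silences of 34 and 61 days, a shared threshold of 60 days); the function names are hypothetical.

```python
UNIVERSAL_THRESHOLD_DAYS = 60  # shared cut-off used in the example above


def universal_reading(days_silent, threshold=UNIVERSAL_THRESHOLD_DAYS):
    """One rule for everyone: silent longer than the threshold means dormant."""
    return "dormant" if days_silent > threshold else "active"


def percent_vs_usual_rhythm(days_silent, usual_interval):
    """Signed percentage by which the silence differs from the usual interval."""
    return round((days_silent / usual_interval - 1) * 100)


cases = {
    "Milanese wine shop": {"usual_interval": 21, "days_silent": 34},
    "Roman wine bar": {"usual_interval": 84, "days_silent": 61},
}

readings = {
    name: (
        universal_reading(c["days_silent"]),
        percent_vs_usual_rhythm(c["days_silent"], c["usual_interval"]),
    )
    for name, c in cases.items()
}

for name, (label, pct) in readings.items():
    print(f"{name}: universal={label}, vs own rhythm={pct:+d}%")
# Milanese wine shop: universal=active, vs own rhythm=+62%
# Roman wine bar: universal=dormant, vs own rhythm=-27%
```

The customer labelled active is well past its own rhythm, while the one labelled dormant has not yet reached it. Moving the threshold to 30 or 90 days would flip one label and leave the other wrong.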

The choice of robust measures is a consequence of the nature of the observed data, more than a statistical refinement. The mean in these contexts tends to be unstable and poorly representative, while percentiles offer a more coherent reference.

The individual cycle is a historical equilibrium point that gives meaning to the time elapsed since the last event. It summarises behaviour that has already happened and says nothing about future behaviour.

A continuous measure of deviation

Once an individual reference is defined, the customer’s current state can be read as a deviation from their own historical equilibrium. Quantum RFM introduces a continuous measure that describes this relationship directly.

It is a local measure: it serves to observe the same customer over time while keeping the context of their history, and it loses its meaning when used to compare different customers. It says where the system is with respect to what has been normal for it, without claiming to say whether it is doing well or badly in absolute terms.

The continuity of the measure is central. Discrete classifications are an operational necessity but do not describe the phenomenon: reducing a continuous dynamic to a class too early loses information.
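The text does not give a formula for this measure, so the following is only one possible formulation, offered as an assumption: the distance from the customer's median interval, scaled by the spread on the relevant side of the median. The function name and the sample cycle are hypothetical.

```python
def deviation(days_silent, median, q1, q3):
    """Signed distance from the customer's own median interval.

    0 means exactly on the usual rhythm, +1 the third quartile and -1 the
    first quartile. Each side is scaled by its own half-spread, so an
    asymmetric interval distribution is not forced into a symmetric band.
    """
    half_spread = (q3 - median) if days_silent >= median else (median - q1)
    if half_spread <= 0:
        raise ValueError("quartile must differ from the median on the measured side")
    return (days_silent - median) / half_spread


# Hypothetical cycle for one customer: median 21 days, quartiles at 16 and 36.
cycle = {"median": 21, "q1": 16, "q3": 36}

# The same customer observed at four different moments of silence.
trajectory = {day: round(deviation(day, **cycle), 2) for day in (10, 21, 34, 45)}
print(trajectory)  # {10: -2.2, 21: 0.0, 34: 0.87, 45: 1.6}
```

A two-class label such as "inside the band" would treat day 22 and day 35 as identical, whereas the continuous value keeps the difference between a customer that has just passed its rhythm and one that is about to leave its band.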

Real distributions and choices of robustness

Observed behaviours rarely follow regular distributions. They are often concentrated in specific intervals, interrupted by periods of inactivity, or marked by exceptional events that temporarily alter the rhythm.

In this context, the use of the mean or of models that assume normality introduces systematic distortions. Outliers belong to the phenomenon we want to describe, and the model must be able to accommodate them as part of the distribution rather than eliminate them as noise.
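A small numerical illustration of the distortion, using made-up intervals: a single exceptional gap is kept in the data in both cases, and only the choice of summary changes.

```python
from statistics import mean, median

# Hypothetical interval history: a steady three-week rhythm...
regular_gaps = [19, 20, 21, 22, 23]
# ...followed by one exceptional 120-day gap (for instance a seasonal closure).
with_outlier = regular_gaps + [120]

summary = {
    "mean_before": mean(regular_gaps),
    "mean_after": mean(with_outlier),
    "median_before": median(regular_gaps),
    "median_after": median(with_outlier),
}
print(summary)
```

The mean jumps from 21 to 37.5 days, a rhythm the customer has never actually shown, while the median moves from 21 to 21.5. The outlier remains part of the distribution and would still show up in the upper percentiles.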

Quantum RFM adopts robust measures because it seeks a stable representation of real behaviour. Robustness is a methodological choice that privileges interpretive coherence over formal precision, and the simplicity of the metrics is a necessary condition for the model to be readable and used with awareness of what it measures.

When the concept of cycle stops being informative

Not all customers display behaviour regular enough to be summarised in a cycle. Some act episodically, others concentrate orders in narrow windows, others still alternate phases with completely different logics.

Quantum RFM explicitly recognises this limit through a measure of consistency that does not modify the estimated cycle but qualifies the trust we can place in it. The cycle stays the same; what changes is the weight we attribute to it.

Consistency signals when the model is operating on a fragile base and when, instead, the historical reference is sufficiently stable. It qualifies the reading without altering it: the customers are the same, and their operational classification stays unchanged.

In these cases the model makes explicit an epistemic limit, a boundary beyond which its reading does not offer sufficient guarantees to act. Consistency then becomes a parameter of risk management internal to the model itself, and signals where human intervention remains necessary.
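The text does not specify how consistency is computed. As a hedged sketch, one robust candidate is one minus the quartile coefficient of dispersion of the intervals, reported only when enough history exists. The formula, the minimum of four intervals, and the function name are all assumptions.

```python
from statistics import quantiles

MIN_INTERVALS = 4  # assumed minimum history before a score is reported


def consistency(intervals, min_intervals=MIN_INTERVALS):
    """Score in [0, 1]: 1 means perfectly regular gaps, lower means more erratic.

    Returns None when the history is too short to judge. The estimated
    cycle itself is not touched; this only qualifies how far to trust it.
    """
    if len(intervals) < min_intervals:
        return None
    q1, _, q3 = quantiles(intervals, n=4, method="inclusive")
    if q1 + q3 == 0:
        return None
    # Quartile coefficient of dispersion: spread relative to level.
    return 1 - (q3 - q1) / (q3 + q1)


# Hypothetical interval histories in days.
regular = [20, 21, 21, 22, 23]
erratic = [5, 9, 30, 75, 140]
too_short = [30, 45]

scores = {
    "regular": consistency(regular),
    "erratic": consistency(erratic),
    "too_short": consistency(too_short),
}
print(scores)
```

The regular history scores close to 1 and the erratic one close to 0.2, while the short history returns no score at all. Returning `None` rather than a low number keeps "not enough evidence" distinct from "evidence of irregularity".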

States as a tool for operational reading

To be usable, a continuous measure must be translated into an operational language. The states of Quantum RFM respond to this need without becoming identity categories.

They are reading tools that describe where the system currently stands with respect to its own historical behaviour. They remain operational and revocable: recalibrating them as the model evolves is part of the work, while treating them as the customer's identity freezes them in a way the phenomenon does not tolerate.

A state makes the available information usable without exhausting it. It serves to orient attention and make action possible, while keeping the complexity of the underlying phenomenon intact.
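A sketch of how a continuous deviation score could be translated into states while staying revocable. It assumes a score where 0 is the customer's usual rhythm and 1 the upper edge of its normal band; the state names and cut-points are hypothetical configuration, not the model's actual vocabulary.

```python
from bisect import bisect_right

# Hypothetical, recalibratable configuration: three cut-points, four states.
STATE_CUTS = (0.0, 1.0, 2.0)
STATE_NAMES = ("ahead of rhythm", "within band", "stretching", "out of band")


def read_state(deviation, cuts=STATE_CUTS, names=STATE_NAMES):
    """Return the state label together with the score it was derived from."""
    if len(names) != len(cuts) + 1:
        raise ValueError("there must be exactly one more state name than cut-points")
    return names[bisect_right(cuts, deviation)], deviation


print(read_state(-0.5))  # ('ahead of rhythm', -0.5)
print(read_state(1.2))   # ('stretching', 1.2)

# Recalibrating the cut-points changes the label, not the customer.
print(read_state(1.2, cuts=(0.0, 1.5, 3.0)))  # ('within band', 1.2)
```

Keeping the cut-points as plain data and returning the score alongside the label reflects the two properties described above: the state can be redrawn at any time, and it never replaces the measure it summarises.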

Operational use of the model

Quantum RFM was designed to provide a coherent reading of behaviour over time and to reduce the risk of out-of-context interventions. Higher ambitions, from predicting the next order to automating the entire customer relationship, belong to other families of models and other intervention philosophies.

The introduction of consistency makes explicit a principle that is often implicit: not all situations are equally automatable. When information is fragile the model signals the need for caution.

In this sense Quantum RFM is a decision-support tool that always remains subordinate to the judgement of those who use it. It offers an interpretive structure that helps decide when to act, when to wait, and when to put human judgement back at the centre.
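The three outcomes can be sketched as a small rule that combines a deviation score with a consistency score. The rule, its thresholds, and the parameter names are illustrative assumptions; the output is a suggestion for the person using the model, not an automated action.

```python
def suggest(deviation, consistency, min_consistency=0.5, attention_at=0.8):
    """Suggest whether to act, wait, or hand the case to human judgement.

    deviation: position relative to the customer's own cycle (0 = usual rhythm,
        1 = upper edge of the normal band); an assumed scale.
    consistency: trust in the estimated cycle, in [0, 1], or None when the
        history is too short to judge.
    """
    if consistency is None or consistency < min_consistency:
        # Fragile base: the model states its limit instead of guessing.
        return "human review"
    return "act" if deviation >= attention_at else "wait"


# Hypothetical readings for three customers.
print(suggest(deviation=0.87, consistency=0.9))   # act
print(suggest(deviation=-0.90, consistency=0.9))  # wait
print(suggest(deviation=1.50, consistency=0.2))   # human review
```

The consistency check comes first by design: a large deviation measured against an unreliable cycle is routed to a person rather than turned into an alert.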

Concluding remarks

Quantum RFM is an interpretive framework before being an operational model. It arises from the need to read customer behaviour without imposing artificial regularities or arbitrary thresholds.

Treating the customer as a state system means accepting that behaviour is dynamic, dependent on history, often irregular. The model tries to make this complexity readable and usable, leaving it intact in its varied character.

What it offers is a conceptual structure to observe better, decide with greater coherence, and recognise when automation reaches its own limits.